Lovely wedding gifts from Dr Clare…

Monthly Archives: December 2014

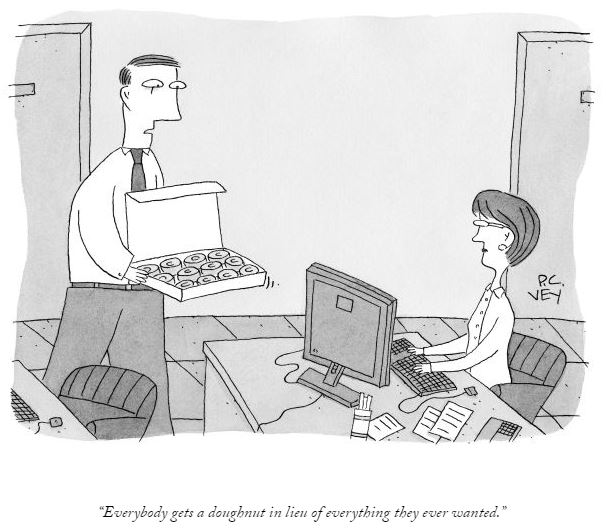

Everybody gets a doughnut in lieu of everything they’ve ever wanted

Pinata’s got the bat

Confirmation Bias

Surgical performance data released by NHS

encouraging progress from NHS…

http://www.telegraph.co.uk/health/nhs/11240241/PIC-AND-HOLD-Just-three-surgeons-named-as-having-high-death-rates.html

Just three surgeons have been named as performing more poorly than they should be under new data comparing the death rates of 5,000 surgeons in England.

Data published today on a central NHS website has been hailed as part of a “world leading transparency drive”.

The figures show that almost every surgeon in the country has been found to be operating within “the expected range” of performance.

NHS England said the findings should reassure the public.

But critics questioned whether the limits were set too widely, allowing surgeons to be labelled as “okay” when their performance was worryingly poor.

Just three surgeons were named today as “outliers” – meaning that their death rates were found to be significantly worse than average over the periods examined.
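The critics' objection depends on where the edge of the "expected range" is drawn. The sketch below shows how such a limit might be computed. It is not the NHS method. It assumes a simple normal approximation to the binomial, a hypothetical national average death rate `p0`, and hypothetical case counts, and it flags a surgeon when the observed rate exceeds `p0` plus `z` standard errors. A larger `z` means a wider range and fewer surgeons flagged.

```python
import math

def upper_limit(p0: float, n: int, z: float) -> float:
    """Upper limit of the 'expected range' for a death rate over n cases.

    Normal approximation to the binomial: p0 + z * sqrt(p0 * (1 - p0) / n).
    """
    return p0 + z * math.sqrt(p0 * (1 - p0) / n)

def is_outlier(deaths: int, n: int, p0: float, z: float) -> bool:
    """Flag a surgeon whose observed death rate is above the upper limit."""
    return deaths / n > upper_limit(p0, n, z)

# Hypothetical national average death rate of 2%.
p0 = 0.02

# The same observed rate (9/200 = 4.5%) judged against a 2-SE and a 3-SE limit.
for z in (2.0, 3.0):
    print(f"z={z}: limit {upper_limit(p0, 200, z):.3f}, "
          f"9/200 flagged: {is_outlier(9, 200, p0, z)}")

# The same 4.5% rate over three times as many cases, against the wider 3-SE limit.
print(f"27/600 flagged at z=3: {is_outlier(27, 600, p0, 3.0)}")
```

With these made-up numbers, 9 deaths in 200 cases is flagged at the narrower limit but not at the wider one. The same 4.5% rate over 600 cases is flagged even at the wider limit, because the standard error shrinks as cases accumulate. The normal approximation is rough when rates are this low. A more careful analysis would use exact binomial limits and a risk-adjusted expected rate for each surgeon.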

Dynesh Rittoo, a vascular surgeon at the Royal Bournemouth and Christchurch Hospitals foundation trust, was found to have a risk-adjusted mortality rate of 10.4 per cent over three years for carotid endarterectomies, a procedure to reduce the risk of stroke. The national average for the same procedure was 2 per cent.

In a statement agreed by the consultant, the trust said: “The overall 3-year rate for Mr Rittoo reflects a series of strokes in 2011. The rate of strokes / deaths within 30 days for Mr Rittoo between October 2011 and September 2013 is 6.4 per cent, and this rate is consistent with the outcomes of other vascular surgeons.”

Jonathan Hyde, a heart surgeon at Royal Sussex County Hospital, was found to have a risk-adjusted hospital mortality rate of 6.63 per cent over a three-year period in which he performed more than 500 operations on adults.

Mr Hyde said he had taken action to improve his mortality rates, with more recent figures suggesting a significant improvement.

He said: “The data shown reflect higher mortality rates from my practice predominantly in the years 2011 and 2012 and therefore refer to outcomes from more than 18 months ago.

“In the light of these outcomes, I have reviewed my practice in detail with the support of an Individual Review from the Royal College of Surgeons. The mortality for my surgery for the period April 2013 to October 2014 has been 1.8 per cent prior to any adjustment for individual patient risk.”

Jeff Garner, a colorectal surgeon at Rotherham NHS Foundation Trust, was found to have a mortality rate of 14 per cent for surgery over the 18 months examined. Of 50 patients treated by Mr Garner, six died.

The trust said: “This Trust has acknowledged its outlier status and that of one of its surgeons. It has confirmed that measures have been taken to improve outcomes with a comprehensive overhaul of the colorectal service in 2012-2013 and close scrutiny of any deaths to identify potential surgical or system failings. The cumulative nature of data reporting means that it is likely to be at least another year before the Trust and surgeon cease to be outliers.”

Roger Taylor, co-founder of data analysts Dr Foster, said it was “misleading” to suggest that there were only three surgeons in the country who were performing significantly worse than the rest.

“If you asked any surgeon whether they thought there were only three in the country who were significantly worse than the rest of them, I think they would laugh,” he said.

He criticised the way the analysis had been done, which he said appeared designed to hide poor performance.

Mr Taylor said: “What is being said is that this will help people to identify good and poor performing individuals. This actually looks like it has been designed to avoid identifying good or poor outcomes.”

He said surgeons should not have been allowed to come up with their own methods to assess performance, which had set limits too broadly. He also said it would have been more sensible to examine performance over longer periods, where trends would be more likely to be revealed.

Professor Sir Bruce Keogh, NHS Medical Director, said: “This represents another major step forward on the transparency journey. It will help drive up standards, and we are committed to expanding publication into other areas.”

“The results demonstrate that surgery in this country is as good as anywhere in the western world and, in some specialities, it is better. The surgical community in this country deserves a great deal of credit for being a world leader in this area.”

Amplio – surgeon score cards

https://medium.com/backchannel/should-surgeons-keep-score-8b3f890a7d4c

Making the Cut

Which surgeon you get matters — a lot. But how do we know who the good ones are?

“You can think of surgery as not really that different than golf.” Peter Scardino is the chief of surgery at Memorial Sloan Kettering Cancer Center (MSK). He has performed more than 4,000 open radical prostatectomies. “Very good athletes and intelligent people can be wildly different in their ability to drive or chip or putt. I think the same thing’s true in the operating room.”

The difference is that golfers keep score. Andrew Vickers, a biostatistician at MSK, would hear cancer surgeons at the hospital having heated debates about, say, how often they took out a patient’s whole kidney versus just a part of it. “Wait a minute,” he remembers thinking. “Don’t you know this?”

“How come they didn’t know this already?”

In the summer of 2009, he and Scardino teamed up to begin work on a software project called Amplio (from the Latin for “to improve”) to give surgeons detailed feedback about their performance. The program, still in its early stages but already starting to be shared with other hospitals, started with a simple premise: the only way a surgeon is going to get better is if he knows where he stands.

Vickers likes to put it this way: his brother-in-law is a bond salesman, and you can ask him, “How’d you do last week?” and he’ll tell you not just his own numbers, but the numbers for his whole group.

Why should it be any different when lives are in the balance?

The central technique of Amplio, using outcome data to determine which surgeons were more successful, and why, takes on a powerful taboo. Perhaps the longest-standing impediment to research into surgical outcomes has been the surgeons themselves; they are the reason that surgeons, unlike bond salesmen (or pilots or athletes), are so much in the dark about their own performance.

“Surgeons basically deeply believe that if I’m a well-trained surgeon, if I’ve gone through a good residency program, a fellowship program, and I’m board-certified, I can do an operation just as well as you can,” Scardino says. “And the difference between our results is really because I’m willing to take on the challenging patients.”

It is, maybe, a vestige of the old myth that anyone ordained to cut into healthy flesh is thereby made a minor god. It’s the belief that there are no differences in skill, and that even if there were differences, surgery is so complicated and multifaceted, and so much determined by the patient you happen to be operating on, that no one would ever be able to tell.

Vickers said to me that after several years of hearing this, he became so frustrated that he sat down with his ten-year-old daughter and conducted a little experiment. He searched YouTube for “radical prostatectomy” and found two clips, one from a highly respected surgeon and one from a surgeon who was rumored to be less skilled. He showed his daughter a 15-second clip of each and asked, “Which one is better?”

“That one,” she replied right away.

When Vickers asked her why, “She looked at me, like, can’t you tell the difference? You can just see.”

A remarkable paper published last year in the New England Journal of Medicine showed that maybe Vickers’s daughter was onto something.

In the study, run by John Birkmeyer, a surgeon who at the time was at the University of Michigan, bariatric surgeons were recruited from around the state of Michigan to submit videos of themselves doing a gastric bypass operation. The videos were sent to another pool of bariatric surgeons to be given a series of 1-to-5 ratings on factors such as “respect for tissue,” “time and motion,” “economy of movement” and “flow of operation.”

The study’s key finding was that not only could you reliably determine a surgeon’s skill by watching them on video — skill was nowhere near as nebulous as had been assumed — but that those ratings were highly correlated with outcomes: “As compared with patients treated by surgeons with high skill ratings, patients treated by surgeons with low skill ratings were at least twice as likely to die, have complications, undergo reoperation, and be readmitted after hospital discharge,” Birkmeyer and his colleagues wrote in the paper.

You can actually watch a couple of these videos yourself. Along with the overall study results, Birkmeyer published two short clips: one from a highly rated surgeon and one from a low-rated surgeon. The difference is astonishing.

You see the higher-rated surgeon first. It’s what you always imagined surgery might look like. The robot hands move with purpose — quick, deliberate strokes. There’s no wasted motion. When they grip or sew or staple tissue, it’s with a mix of command and gentle respect. The surgeon seems to know exactly what to do next. The way they’ve set things up makes it feel roomy in there, and tidy.

Watching the lower-rated surgeon, by contrast, is like watching the hidden camera footage of a nanny hitting your kid: it looks like abuse. The surgeon’s view is all muddled, they’re groping aimlessly at flesh, desperate to find purchase somewhere, or an orientation, as if their instruments are being thrashed around in the undertow of the patient’s guts. It’s like watching middle schoolers play soccer: the game seems to make no sense, to have no plot or direction or purpose or boundary. It’s not, in other words, like, “This one’s hands are a bit shaky,” it’s more like, “Does this one have any clue what they’re doing?”

It’s funny: in other disciplines we reserve the word “surgical” for feats that took a special poise, a kind of deftness under pressure. But the thing we maybe forget is that not all surgery is worthy of the name.

Vickers is best known for showing exactly how much variation there is between surgeons, plotting, in 2007, the so-called “learning curve” for surgery: a graph that tracks, on one axis, the number of cases a surgeon has under his belt, and on the other, his recurrence rates (the rate at which his patients’ cancer comes back).

He showed that in prostate cancer that hasn’t spread beyond the prostate (so-called “organ-confined” cases), the recurrence rates for a novice surgeon were 10 to 15%. For an experienced surgeon, they were less than 1%. With recurrence rates so low for the most experienced surgeons, Vickers was able to conclude that in organ-confined cancer cases, the only reason a patient would recur is “because the surgeon screwed up.”

There’s a large literature, going back to a famous paper in 1979, finding that hospitals with higher volumes of a given surgical procedure have better outcomes. In the ’79 study it was reported that for some kinds of surgery, hospitals that saw 200 or more cases per year had death rates that were 25% to 41% lower than hospitals with lower volumes. If every case were treated at a high-volume hospital, you would avoid more than a third of the deaths associated with the procedure.

But what wasn’t clear was why higher volumes led to better outcomes. And for decades, researchers penned more than 300 studies restating the same basic relationship, without getting any closer to explaining it. Did low-volume hospitals end up with the riskiest patients? Did high-volume hospitals have fancier equipment? Or better operating room teams? A better overall staff? An editorial as late as 2003 summarized the literature with the title, “The Volume–Outcome Conundrum.”

A 2003 paper by Birkmeyer, “Surgeon volume and operative mortality in the United States,” was the first to offer definitive evidence that the biggest factor determining the outcome of many surgical procedures — the hidden element that explained most of the variation among hospitals — was the procedure volume not of the hospital, but of the individual surgeons.

“In general I don’t think anyone was surprised that there was a learning curve,” Vickers says. “I think they were surprised at what a big difference it made.” Surprised, maybe, but not moved to action. “You may think that everyone would drop what they were doing,” he says, “and try and work out what it is that some surgeons are doing that the other ones aren’t… But things move a lot more slowly than that.”

Tired of waiting, Vickers started sharing some initial ideas with Scardino about the program that would become Amplio. It would give surgeons detailed feedback about their performance. It would show you not just your own results, but the results for everyone in your service. If another surgeon was doing particularly well, you could find out what accounted for the difference; if your own numbers dropped, you’d know to make an adjustment. Vickers explains that they wanted to “stop doing studies showing surgeons had different outcomes.”

“Let’s do something about it,” he told Scardino.

The first time I heard about Amplio was on the third floor of the Chrysler Building, in a room they called the Innovation Lab, the very room you’d point to if the Martians ever asked you what a 125-year-old bureaucracy looks like. As I arrived, the receptionist was trying to straighten up a small mess of papers, Post-its, cookies, and coffee stirrers. “The last crowd had a wild time,” she said. Every surface in the room was gray or off-white, the color of questionable eggs. It smelled like hospital-grade hand soap.

The people who filed in, though, and introduced themselves to each other (this was a summit of sorts, a “Collaboration Meeting” where different research groups from around MSK shared their works in progress) looked straight out of a well-funded biotech startup. There was a Fulbright scholar; a double-major in biology and philosophy; a couple of epidemiologists; a mathematician; a master’s in biostats and predictive analytics. There were Harvards, Cals, and Columbias, bright-eyed and sharply dressed.

Vickers was one of the speakers. He’s in his forties but he looks younger, less like an academic than a seasoned ski instructor, a consequence, maybe, of the long wavy hair, or the well-worn smile lines around his eyes, or this expression he has that’s like a mix of relaxed and impish. He leans back when he talks, and he talks well, and you get the sense that he knows he talks well. He’s British, from north London, educated first at Cambridge and then, for his PhD in clinical medicine, at Oxford.

The first big task with Amplio, he said, was to get the data. In order for surgeons to improve, they have to know how well they’re doing. In order to know how well they’re doing, they have to know how well their patients are doing. And this turns out to be trickier than you’d think. You need an apparatus that not only keeps meticulous records, but keeps them consistently, and throughout the entire life cycle of the patient.

That is, you need data on the patient before the operation: How old are they? What medications are they allergic to? Have they been in surgery before? You need data on what happened during the operation: Where’d you make your incisions? How much blood was lost? How long did it take?

And finally, you need data on what happened to the patient after the operation, in some cases years after. In many hospitals, follow-up is sporadic at best. So before the Amplio team did anything fancy, they had to devise a better way to collect data from patients. They had to figure out stuff like whether it was better to give the patient a survey before or after a consultation with their surgeon, what kinds of questions worked best, and who they were supposed to hand the iPad to when the patient was done.

Only when all these questions were answered, and a stream of regular data was being saved for every procedure, could Amplio start presenting something for surgeons to use.

After years of setup, Amplio now is in a state where it can begin to affect procedures. The way it works is that a surgeon logs into a screen that shows where they stand on a series of plots. On each plot there’s a single red dot sitting amid some blue dots. The red dot shows your outcomes; the blue dots show the outcomes for each of the other surgeons in your group.

You can slice and dice different things you’re interested in to make different kinds of plots. One plot might show the average amount of blood lost during the operation against the average length of the hospital stay after it. Another plot might show a prostate patient’s recurrence rates against his continence or erectile function.
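Each plot on such a dashboard comes down to one summary point per surgeon for a chosen pair of outcomes. The following is a minimal sketch of that aggregation, not Amplio's actual code. The record layout, the surgeon IDs and the numbers are all hypothetical, and it uses plain averages with no risk adjustment. The viewing surgeon gets the "red" point, and everyone else's points come back with their names stripped, as the "blue" points.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical case records: (surgeon_id, blood_loss_ml, length_of_stay_days)
cases = [
    ("A", 300, 2), ("A", 500, 3),
    ("B", 800, 4), ("B", 600, 5),
    ("C", 200, 1), ("C", 400, 2),
]

def surgeon_points(records, viewer):
    """Average each surgeon's two outcomes; return the viewer's point and anonymized others."""
    by_surgeon = defaultdict(list)
    for surgeon, blood_loss, stay in records:
        by_surgeon[surgeon].append((blood_loss, stay))
    # One (mean blood loss, mean length of stay) point per surgeon.
    points = {
        s: (mean(b for b, _ in rows), mean(d for _, d in rows))
        for s, rows in by_surgeon.items()
    }
    red = points[viewer]
    # Drop the IDs so the viewer sees colleagues' results but not whose they are.
    blue = [p for s, p in sorted(points.items()) if s != viewer]
    return red, blue

red, blue = surgeon_points(cases, viewer="B")
print("your point (red):", red)
print("colleagues (blue):", blue)
```

Swapping in a different pair of fields, such as recurrence against continence, gives a different plot from the same records.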

There’s something powerful about having outcomes graphed so starkly. Vickers says that there was a surgeon who saw that they were so far into the wrong corner of that plot — patients weren’t recovering well, and the cancer was coming back — that they decided to stop doing the procedure. The men spared poor outcomes by this decision will never know that Amplio saved them.

It’s like an analytics dashboard, or a leaderboard, or a report card, or… well, it’s like a lot of things that have existed in a lot of other fields for a long time. And it kind of makes you wonder, why has it taken so long for a tool like this to come to surgeons?

The answer is that Amplio has cleverly avoided the pitfalls of some previous efforts. For instance, in 1989, New York state began publicly reporting the mortality rates of cardiovascular surgeons. Because the data was “risk-adjusted” (an unfavorable outcome would be considered less bad, or not counted at all, if the patient was at risk to begin with), surgeons started pretending their patients were a lot worse off than they were. In some cases, they avoided patients who looked like goners. “The sickest patients weren’t being treated,” Vickers says. One investigation into why mortality in New York had dropped for a certain procedure, the coronary artery bypass graft, concluded that it was just because New York hospitals were sending the highest-risk patients to Ohio.

Vickers wanted to resist such gaming. But the answer is not to quit adjusting for patient risk. After all, if a given report says that your patients have 60% fewer complications than mine, does that mean that you’re a 60% better surgeon? It depends on the patients we see. It turns out that maybe the best way to prevent gaming is just to keep the results confidential. That sounds counter to a patient’s interests, but it’s been shown that patients actually make little use of objective outcomes data when it’s available, that in fact they’re much more likely to choose a surgeon or hospital based on reputation or raw proximity.
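One common way to make the "60% fewer complications" comparison fair is an observed-to-expected (O/E) ratio: count the bad outcomes, then divide by the number you would expect given each patient's predicted risk. This is a generic sketch of that idea, not the model used by New York State or by Amplio. The per-patient risks here are hypothetical numbers, where a real system would estimate each one from a risk model. The last case shows why such reports invite gaming: inflating the recorded risk raises the expected count and makes the same outcomes look better.

```python
def observed_to_expected(outcomes, predicted_risks):
    """Observed adverse outcomes divided by the number expected from patient risk (O/E)."""
    observed = sum(outcomes)          # outcomes are 1 (complication) or 0 (none)
    expected = sum(predicted_risks)   # sum of each patient's predicted probability
    return observed / expected

# Hypothetical surgeon X: low-risk patients, 2 complications in 100 cases, each predicted at 2%.
x_ratio = observed_to_expected([1] * 2 + [0] * 98, [0.02] * 100)
# Hypothetical surgeon Y: high-risk patients, 5 complications in 100 cases, each predicted at 5%.
y_ratio = observed_to_expected([1] * 5 + [0] * 95, [0.05] * 100)
# Same outcomes for Y, but each patient's recorded risk inflated to 10% ("gaming").
y_gamed = observed_to_expected([1] * 5 + [0] * 95, [0.10] * 100)

print(f"X: raw rate 2%, O/E {x_ratio:.2f}")
print(f"Y: raw rate 5%, O/E {y_ratio:.2f}")
print(f"Y with inflated risk scores: O/E {y_gamed:.2f}")
```

X's raw rate is 60% lower than Y's, yet both come out at an O/E of about 1.0, meaning each does as well as expected for their patients. With inflated risk scores, Y's ratio halves without a single outcome changing.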

With Amplio, since patients, and the hospital, and even your boss are blinded from knowing whose results belong to whom, there’s no incentive to fudge risk factors or insist that a risk factor’s weight be changed, unless you think it’s actually good for the analysis.

That’s why Amplio’s interface for slicing and dicing the data in multiple ways matters, too. Feedback systems in the past that have given surgeons a single-dimensional report — say, they only track recurrence rates — have failed by creating a perverse incentive to optimize along just that one dimension, at the expense of all the others. Another reminder that feedback is, like surgery itself, fraught with complication: if you do it wrong, it can be worse than useless.

Every member of the Amplio team I spoke to stressed this point over and over again, that the system had been painstakingly built from the “bottom up” — tuned via detailed conversations with surgeons (“Are you accounting for BMI? What if we change the definition of blood loss?”) — so that the numbers it reported would be accurate, and risk-adjusted, and multidimensional, and credible. Because only then would they be actionable.

Karim Touijer, a surgeon at MSK who has used Amplio, explains the system’s chief benefit is the fact that you can vividly see how you’re doing, and that someone else is doing better. “When you set a standard,” he says, “the majority of people will improve or meet that standard. You tend to shrink the outliers. If I’m an outlier, if my performance leaves something to be desired, then I can go to my colleagues and say what is it that you’re doing to get these results?” Touijer sees this as the gradual standardization of surgery: you find the best performers, figure out what makes them good, and spread the word. He said that already within his group, because the conversations are more tied to outcomes, they’re talking about technique in a more objective way.

In fact, he says, as a result of Amplio he and his team have devised the first randomized clinical trial that is solely dedicated to surgical maneuvers.

Touijer specializes in the radical prostatectomy, considered one of the most complex and delicate operations in all of surgical practice. The procedure, in which a patient’s cancerous prostate is entirely removed, is highly sensitive to an individual surgeon’s skill. The reason is that the cancer ends up being very close to the nerves that control sexual and urinary function. It’s an operation unlike, say, kidney cancer surgery, where you can easily go widely around the cancer. If you operate too far around the prostate, you could easily damage the rectum, the bladder, the nerves responsible for erection, or the sphincter responsible for urinary control. “It turns out that radical prostatectomy is very, very intimately influenced by surgical technique,” Touijer says. “One millimeter on one side or less than a millimeter on the other can change the outcome.”

There’s a moment during the procedure where the surgeon has to decide whether to make a particular stitch. Some surgeons do it, some don’t; we don’t yet know which way is better. In the randomized trial, if the surgeon doesn’t have a compelling reason to pick one of the two alternatives, he lets the computer decide randomly for him. With enough patients, it should be possible to isolate the effect of that one decision, and to find out whether the extra stitch leads to better outcomes. The beauty is, since the outcomes data was already being tracked, and the patients were already going to have the surgery, the trial costs almost nothing.

If you’ve worked on the web, this model of rapid, cheap experimentation probably sounds familiar: what Touijer is describing is the first A/B test for surgery. As it turns out, this particular test didn’t yield significant results. But several other tests are in the works, and some may refine specific surgical techniques, improving the odds for all patients.
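The stitch trial has the same two moving parts as a web A/B test: random assignment when the surgeon has no preference, then a comparison of outcome rates between the two arms. Below is a minimal sketch under stated assumptions. The arm names, the 50/50 split and the outcome counts are hypothetical, and the analysis is a standard two-proportion z-test, not necessarily what the MSK team actually ran.

```python
import math
import random

def assign_arm(rng: random.Random) -> str:
    """Let the computer decide: extra stitch or no stitch, with equal probability."""
    return "stitch" if rng.random() < 0.5 else "no_stitch"

def two_proportion_z(success_a: int, n_a: int, success_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two proportions, using a pooled standard error."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

rng = random.Random(2014)  # fixed seed so the assignment sequence is reproducible
print([assign_arm(rng) for _ in range(10)])

# Hypothetical results: patients continent at 12 months, 200 patients per arm.
z, p = two_proportion_z(160, 200, 150, 200)
print(f"z = {z:.2f}, two-sided p = {p:.2f}")
```

With these made-up counts (80% against 75%), z is about 1.2 and p is about 0.23, so a 5-point difference on 200 patients per arm cannot be told apart from chance. Because the outcome data are already being collected for every patient, running a test like this adds little cost beyond the random assignment itself.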

In Better, Atul Gawande argues that when we think of improving medicine, we always imagine making new advances, discovering the gene responsible for a disease, and so on — and forget that we can simply take what we already know how to do, and figure out how to do it better. In a word, iterate.

“But to do that,” Scardino says, “we have to measure it, we have to know what the results are.”

Scardino describes how, when laparoscopy was first becoming an option for radical prostatectomy, there was a lot of hype. “The company and many doctors who were doing it immediately claimed that it was safer, had better results, was more likely to cure the cancer and less likely to have permanent urinary or sexual problems.” But, he says, the data to support it were weak, and biased. “We could see in Amplio early on that as people started doing robotic surgery, the results were clearly worse.” It took time for them to hit par with the traditional open procedure; it took time for them to get better.

After a pilot among prostate surgeons, Amplio spread quickly to other services within MSK, including for kidney cancer, bladder cancer and colorectal cancer. Vickers’s team has been working with other hospitals — including Columbia in New York, the Barbara Ann Karmanos Cancer Institute in Michigan, and the MD Anderson Cancer Center in Texas — to slowly begin integrating with their systems. But it’s still early days: even within their own hospital, surgeons were wary of Amplio. It took many conversations, and assurances, to convince them that the data were being collected for their benefit — not to “name and shame” bad performers.

We know what happens when performance feedback goes awry — similar efforts to “grade” American schoolteachers, for instance, have perhaps generated more controversy than results. To do performance feedback well requires patience, and tact, and an earnest imperative to improve everyone’s results, not just to find the negative outliers. But Vickers believes that enough surgeons have signed on that the taboo has been broken at MSK. And results are bound to flow from that.

It’s all about trust. Remember the Birkmeyer study that compared surgeons using videos? It was only possible because Birkmeyer had built up relationships by way of a previous outcomes experiment in Michigan that meticulously protected data. “That’s a question that we get really frequently,” Birkmeyer told me when we spoke about the paper. “How on earth did we ever pull that study off?” The key, he says, is that years of research with these surgeons had slowly built goodwill. When it came time to make a big ask, “the surgeons were at a place where they could trust that we weren’t gonna screw them.”

Amplio will no doubt have to be able to say the same thing, if it’s to spread beyond the country’s best research cancer centers into the average regional hospital.

In 1914, a surgeon at Mass General got so fed up with the administration, and their refusal to measure outcomes, that he created his own private hospital, “the End Result Hospital,” where detailed records were to be kept of every patient’s “end results.” He published the first five years of his hospital’s cases in a book that became one of the founding documents of evidence-based medicine.

“The Idea is so simple as to seem childlike,” he wrote, “but we find it ignored in all Charitable Hospitals, and very largely in Private Hospitals. It is simply to follow the natural series of questions which any one asks in an individual case: What was the matter? Did they find it out beforehand? Did the patient get entirely well? If not — why not? Was it the fault of the surgeon, the disease, or the patient? What can we do to prevent similar failures in the future?”

It might finally be time for that simple, “childlike” concept to reach fruition. It’s like Vickers said to me one night in early November, as we were discussing Amplio, “Having been in health research for twenty years, there’s always that great quote of Martin Luther King: The arc of history is long, but it bends towards justice.”

How Medical Care Is Being Corrupted

http://www.nytimes.com/2014/11/19/opinion/how-medical-care-is-being-corrupted.html?_r=0

WHEN we are patients, we want our doctors to make recommendations that are in our best interests as individuals. As physicians, we strive to do the same for our patients.

But financial forces largely hidden from the public are beginning to corrupt care and undermine the bond of trust between doctors and patients. Insurers, hospital networks and regulatory groups have put in place both rewards and punishments that can powerfully influence your doctor’s decisions.

Contracts for medical care that incorporate “pay for performance” direct physicians to meet strict metrics for testing and treatment. These metrics are population-based and generic, and do not take into account the individual characteristics and preferences of the patient or differing expert opinions on optimal practice.

For example, doctors are rewarded for keeping their patients’ cholesterol and blood pressure below certain target levels. For some patients, this is good medicine, but for others the benefits may not outweigh the risks. Treatment with drugs such as statins can cause significant side effects, including muscle pain and increased risk of diabetes. Blood-pressure therapy to meet an imposed target may lead to increased falls and fractures in older patients.

Physicians who meet their designated targets are not only rewarded with a bonus from the insurer but are also given high ratings on insurer websites. Physicians who deviate from such metrics are financially penalized through lower payments and are publicly shamed, listed on insurer websites in a lower tier. Further, their patients may be required to pay higher co-payments.

These measures are clearly designed to coerce physicians to comply with the metrics. Thus doctors may feel pressured to withhold treatment that they feel is required or feel forced to recommend treatment whose risks may outweigh benefits.

It is not just treatment targets but also the particular medications to be used that are now often dictated by insurers. Commonly this is done by assigning a larger co-payment to certain drugs, a negative incentive for patients to choose higher-cost medications. But now some insurers are offering a positive financial incentive directly to physicians to use specific medications. For example, WellPoint, one of the largest private payers for health care, recently outlined designated treatment pathways for cancer and announced that it would pay physicians an incentive of $350 per month per patient treated on the designated pathway.

This has raised concern in the oncology community because there is considerable debate among experts about what is optimal. Dr. Margaret A. Tempero of the National Comprehensive Cancer Network observed that every day oncologists saw patients for whom deviation from treatment guidelines made sense: “Will oncologists be reluctant to make these decisions because of an adverse effect on payments?” Further, some health care networks limit the ability of a patient to get a second opinion by going outside the network. The patient is financially penalized with large co-payments or no coverage at all. Additionally, the physician who refers the patient out of network risks censure from the network administration.

When a patient asks “Is this treatment right for me?” the doctor faces a potential moral dilemma. How should he answer if the response is to his personal detriment? Some health policy experts suggest that there is no moral dilemma. They argue that it is obsolete for the doctor to approach each patient strictly as an individual; medical decisions should be made on the basis of what is best for the population as a whole.

We fear this approach can dangerously lead to “moral licensing” — the physician is able to rationalize forcing or withholding treatment, regardless of clinical judgment or patient preference, as acceptable for the good of the population.

Medicine has been appropriately criticized for its past paternalism, where doctors imposed their views on the patient. In recent years, however, the balance of power has shifted away from the physician to the patient, in large part because of access to clinical information on the web.

In truth, the power belongs to the insurers and regulators that control payment. There is now a new paternalism, largely invisible to the public, diminishing the autonomy of both doctor and patient.

In 2010, Congress passed the Physician Payments Sunshine Act to address potential conflicts of interest by making physician financial ties to pharmaceutical and device companies public on a federal website. We propose a similar public website to reveal the hidden coercive forces that may specify treatments and limit choices through pressures on the doctor.

Medical care is not just another marketplace commodity. Physicians should never have an incentive to override the best interests of their patients.

How to Make Health Care Accountable When We Don’t Know What Works

https://hbr.org/2014/11/how-to-make-health-care-accountable-when-we-dont-know-what-works

Accountable care organizations (ACOs) are widely regarded as part of the solution to a fragmented health care system — one plagued by duplicative services, avoidable errors, and other impediments to efficiency and quality. But 20 years of reform efforts have led to a wave of provider consolidation that has made little headway in efficiently coordinating care. Providers continue to follow a strategy that has shown minimal evidence of success.

We should admit that we don’t know what works and, instead, test a variety of potential solutions that could address fragmentation. Before I explore the concrete steps we can take to encourage that kind of innovation, let me provide some important historical context.

Payment Reform’s First Life

Early efforts to promote coordinated care emphasized payment reform. Toward that end, managed-care and health maintenance organizations used payment schedules and gatekeeper physicians to create provider networks. In addition, the Clinton administration introduced proposals to implement “pay for performance” and dedicated quality-improvement initiatives, suggesting that financial pressures might force the coordination and rationalization of care. But Congress rejected payment-focused reform, and market preferences eliminated managed-care pressures.

Commentators then suggested that payment reform could happen only in conjunction with provider-based reforms, and the Institute of Medicine later issued a series of reports calling for pairing payment solutions with structural reform. Then, when the Affordable Care Act instituted Medicare’s Shared Savings Program in 2010, it invited providers to create ACOs and to accept changes in reimbursement that allowed them to recoup part of any savings they generated. Eventually, however, prospective ACOs were given the option of continuing under Medicare’s traditional fee-for-service payments. In other words, providers were encouraged to pursue structural reform while being permitted to avoid any constraint from payment reform.

The Disappointments of Provider Reform

The continued failure of payment-driven reform has sadly given provider-based reform a blank check. The U.S. health sector has been in a merger-and-acquisition frenzy for nearly 20 years, and much of the integration has been justified as an effort to construct ACOs. Buzz phrases such as “clinical integration” and “eliminating fragmentation” are routinely paraded before regulators who scrutinize proposed mergers.

The problem, of course, is that after waves of acquisitions, most hospital markets are now highly concentrated and lack meaningful competition. And, consistent with basic economic theory, hospital systems that acquired dominant market shares dramatically increased prices for health care services. Perhaps even worse is that these large entities have shown little capacity for achieving the efficiencies they promised through coordinated care. Newly integrated delivery systems retain their inefficiencies and bring higher prices without any evident reduction in costs or errors.

We don’t know exactly why efforts at integration have not yielded efficiencies, and it seems we simply didn’t think very hard about it. The health reform debate focused primarily on a handful of success stories we all can repeat in our sleep: Kaiser, Geisinger, Intermountain. The plan was to have other hospital systems mimic them. That is like instructing all high-tech companies to mimic Apple, as if what makes Apple successful is an easy-to-follow cookbook for large-scale structural change.

It is a curiosity about the U.S. health system that producers with better outcomes and lower costs than their competitors cannot dominate the market. Kaiser, for example, has tried but failed to enter more local markets. But it is foolhardy to think that the systems that have not achieved Kaiser’s success can replicate it simply with the help of government regulators. This duplication strategy at best seems mindless, and at worst smacks of a Khrushchev-era economic policy.

The truth is, despite a glut of business press and how-to manuals, we still understand very little about why certain organizations succeed and others do not. With all the complexities of delivering medical care, we should expect even more variation among health care providers than among manufacturers. We likewise should be very hesitant to claim we understand what works and prescribe nationwide structural reforms.

Concrete Steps for the Future of ACOs

Precisely because we don’t know what works at this juncture, we cannot continue encouraging the formation of vast integrated systems that are difficult to disentangle. Until we have more evidence that integration yields efficiencies, regulators should continue to halt mergers that harm competition.

But scrutinizing mergers will only prevent further damage. We also must improve our delivery system, and we cannot give up on the ACO as a potential source of innovative configurations. Specifically, we should:

- Redefine and broaden our concept of an ACO. Too much ACO formation has emphasized linking hospitals with other providers. Instead of this top-down approach, we should work from the bottom up by linking providers with consumers and payors, so that the focus is on serving patients’ needs and managing budgets.

- Encourage nontraditional parties — such as social workers, professionals who help people navigate the health care system (often called “navigators”), and IT companies — to lead efforts at ACO formation. These parties would be well equipped to construct networks that provide accountability, given their expertise in connecting consumers to complex organizations and advocating on behalf of those consumers.

- Use contract-based and virtual provider collaborations instead of relying on mergers. Joining providers under common ownership might not be necessary. Electronic health records (EHRs) and other information technologies have the potential to create platforms that enable coordination without incurring the high costs of integration. EHR tools can also allow patients to control their own information and tailor collaborations to individual patients’ needs.

- Entertain disruptively innovative reconstructions of the health care delivery system — ones that make use of mobile health, medical tourism, and informatics. Many technology companies that traditionally have not participated in the health sector are now offering improvements to our delivery system. Because business scholarship tells us that outsiders frequently introduce the most valuable innovations to a market, we should ensure that regulatory barriers do not preclude participation from unconventional participants.

- Perhaps most important, we cannot pursue structural reform without payment reform. We will distinguish valuable provider reforms from ineffective ones only if sustained revenue pressures force ACOs to be truly “accountable” to consumer demands and other economic realities.

Not every solution we try will work, but we’re likely to have more success letting providers figure out what works than telling them how to do it.